How Do I Get All URLs From A Website (No Coding Needed)

Extracting all the URLs from a website is a common task, and it gets harder the bigger the site is. People usually want a full URL list because they are moving to a different domain, want to check a competitor’s website, or want to run some analysis.

Here is the approach I use, combining several online tools, to get all the URLs from a website of any size. I will explain each step in detail with screenshots.

1.) Find The Sitemap Of The Website

2.) Gather All Sitemap Links (Posts, Categories, Pages, Products, etc.)

3.) Use An XML Sitemap Extractor For Each Link And Move The Results To A Document

If the above approach doesn’t work for you, I have some alternative options too, so keep reading!

1.) Find The Sitemap Of The Website

Most well-maintained websites have a sitemap, because it helps search engines like Google discover and crawl their pages and is considered an SEO best practice. The sitemap lists the site’s pages (usually every page the owner wants indexed), so our first job is to find its exact address.

To find it, add each of these terms to the end of the domain, for example https://www.buycompanyname.com/*******: sitemap, sitemap.xml, sitemap_index.xml, or sitemap.html. If more than one of them works, pick the most detailed sitemap.
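If you are comfortable running a short script, these manual checks can also be automated (entirely optional, since the rest of this guide needs no code). The Python sketch below (Python 3.9+, standard library only) builds the candidate addresses from the four names listed above and keeps the ones that answer with HTTP 200. The function names, the HTTPS default, and the 10-second timeout are illustrative assumptions, and some sites block scripted requests, so a miss here does not prove the sitemap is absent.

```python
import urllib.request

# The sitemap names tried manually above; real sites may use others.
SITEMAP_PATHS = ["sitemap", "sitemap.xml", "sitemap_index.xml", "sitemap.html"]


def candidate_sitemap_urls(domain: str) -> list[str]:
    """Build candidate sitemap URLs for a domain such as 'www.example.com'."""
    base = domain.strip().rstrip("/")
    if not base.startswith(("http://", "https://")):
        base = "https://" + base  # assumption: the site is served over HTTPS
    return [f"{base}/{path}" for path in SITEMAP_PATHS]


def existing_urls(urls: list[str], timeout: float = 10.0) -> list[str]:
    """Return the URLs that answer with HTTP 200 (this makes network requests)."""
    found = []
    for url in urls:
        try:
            with urllib.request.urlopen(url, timeout=timeout) as response:
                if response.status == 200:
                    found.append(url)
        except OSError:
            # HTTPError (e.g. 404), URLError and timeouts are all OSError subclasses.
            continue
    return found
```

For example, `existing_urls(candidate_sitemap_urls("www.example.com"))` returns whichever of the four addresses respond.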

If you don’t see any results, try a Google search with one of these queries: site:example.com inurl:sitemap or site:example.com inurl:xml. If that doesn’t work either, add “/robots.txt” to the end of the domain. Sites often list their sitemap there, on a line starting with “Sitemap:”.
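The robots.txt check can be scripted as well. Python’s standard `urllib.robotparser` module (Python 3.8+) collects any `Sitemap:` lines and exposes them through `RobotFileParser.site_maps()`. The helper names below are hypothetical, and `fetch_robots` assumes the site is served over HTTPS.

```python
import urllib.request
from urllib.robotparser import RobotFileParser


def sitemaps_from_robots(robots_txt: str) -> list[str]:
    """Return the URLs declared on 'Sitemap:' lines of a robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when the file declares no sitemap.
    return parser.site_maps() or []


def fetch_robots(domain: str, timeout: float = 10.0) -> str:
    """Download https://<domain>/robots.txt (network request; HTTPS is assumed)."""
    with urllib.request.urlopen(f"https://{domain}/robots.txt", timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")
```

Usage would look like `sitemaps_from_robots(fetch_robots("www.example.com"))`, which returns an empty list when robots.txt names no sitemap.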

For instance, here is what happens when I try this workflow on my site:

I see some links here, but compared with the total number of pages on my site, there are fewer than expected.

The next option doesn’t return anything, so I move on.

Here I see a more detailed sitemap, which is exactly what I was looking for!

2.) Gather All Sitemap Links (Posts, Categories, Pages, Products, etc.)

Every site’s sitemap is a bit different. Some sitemaps have no separate sub-sitemaps for pages, categories, etc. at all and list every URL in one file. Others group the links differently, for example under “products”.

In my case, I extract the links from these four sitemaps:

https://www.buycompanyname.com/post-sitemap.xml

https://www.buycompanyname.com/page-sitemap.xml

https://www.buycompanyname.com/category-sitemap.xml

https://www.buycompanyname.com/author-sitemap.xml
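For reference, a sitemap index like this one is an XML file in the sitemaps.org format, where each child sitemap sits in a `<sitemap><loc>` element. A minimal sketch, assuming you have already downloaded the index as text and that it follows the standard sitemaps.org namespace (HTML sitemaps will not parse); `child_sitemaps` is a hypothetical name:

```python
import xml.etree.ElementTree as ET

# XML namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def child_sitemaps(index_xml: str) -> list[str]:
    """List the child sitemap URLs (<sitemap><loc>) in a sitemap index."""
    root = ET.fromstring(index_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:sitemap/sm:loc", NS)
        if loc.text  # skip empty <loc/> elements
    ]
```

On an index like the one above, this should return the individual links such as .../post-sitemap.xml and .../page-sitemap.xml, which saves collecting them by hand.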

3.) Use An XML Sitemap Extractor For Each Link And Move The Results To A Document

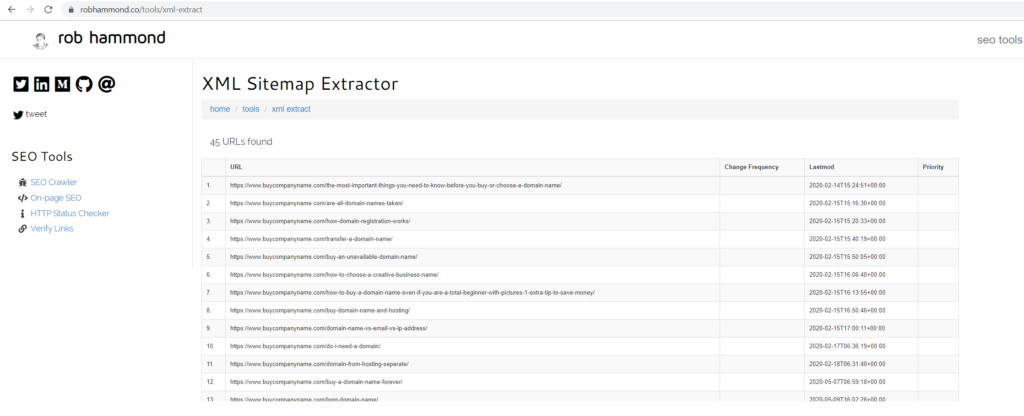

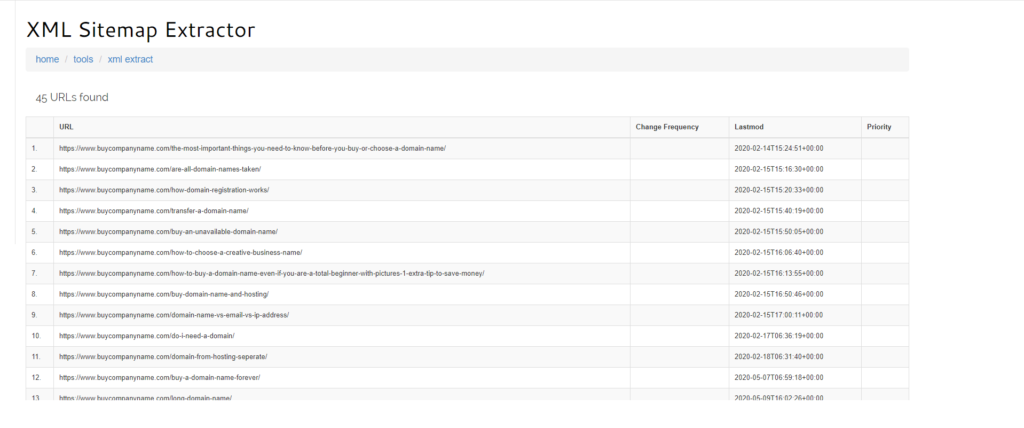

Now that you have the sitemap links, you can extract the URLs from each one. This site works great: https://robhammond.co/tools/xml-extract
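An extractor like this essentially reads the `<loc>` value inside each `<url>` entry of the sitemap. A rough standard-library equivalent is sketched below. It assumes an uncompressed sitemap that uses the sitemaps.org namespace (a gzipped `.xml.gz` sitemap would need `gzip.decompress` first), and `page_urls`/`fetch` are hypothetical names.

```python
import urllib.request
import xml.etree.ElementTree as ET

# XML namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def page_urls(urlset_xml) -> list[str]:
    """Return every page URL (<url><loc>) in a sitemap; accepts str or bytes."""
    root = ET.fromstring(urlset_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", NS)
        if loc.text  # skip empty <loc/> elements
    ]


def fetch(url: str, timeout: float = 10.0) -> bytes:
    """Download a sitemap as raw bytes (network request; no gzip handling)."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()
```

For one sitemap link, `page_urls(fetch("https://www.example.com/post-sitemap.xml"))` would give the same list the online tool shows.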

Run a report:

When the results appear, hold down the Shift key and left-click the first result. Keep holding Shift, scroll down to the last row, and left-click it. That selects every row in between, and you are good to go! Selecting the rows this way keeps the formatting when we move the results to a file.

The last step is to copy the results into the file format you prefer: right-click > Copy, then paste wherever you want. In my case, I pasted the results into an Excel document.

Repeat the same process for the rest of the sitemap links, if there are more.
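If you would rather skip the copy-and-paste step, the per-sitemap results can be merged into one CSV file, which Excel opens directly. A minimal sketch, assuming you already have the results as a dict that maps each sitemap link to its list of page URLs; the function name and the `url`/`source_sitemap` column names are illustrative. A page listed in several sitemaps is kept once, under the first sitemap it appears in.

```python
import csv
import io


def urls_to_csv(results: dict[str, list[str]]) -> str:
    """Merge {sitemap_link: [page_url, ...]} into CSV text with one row per unique URL."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["url", "source_sitemap"])
    seen = set()
    for sitemap, urls in results.items():
        for url in urls:
            if url not in seen:  # the same page can appear in several sitemaps
                seen.add(url)
                writer.writerow([url, sitemap])
    return buffer.getvalue()
```

To save it, something like `pathlib.Path("urls.csv").write_text(urls_to_csv(results), encoding="utf-8")` works.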

You can extract URLs even faster with Google Sheets, but on a really big site the sheet may become unresponsive. I found this trick on this blog: https://www.mariolambertucci.com/3-ways-to-extract-urls-from-sitemaps/

Make a copy of this sheet: https://docs.google.com/spreadsheets/d/1-QiRWQVHqg7nL56Uwy_kqHt8i53oaq4yvG7duGIW3C4/edit#gid=1429691697 Then replace the contents of cell B2 with the sitemap link.

Alternative Options

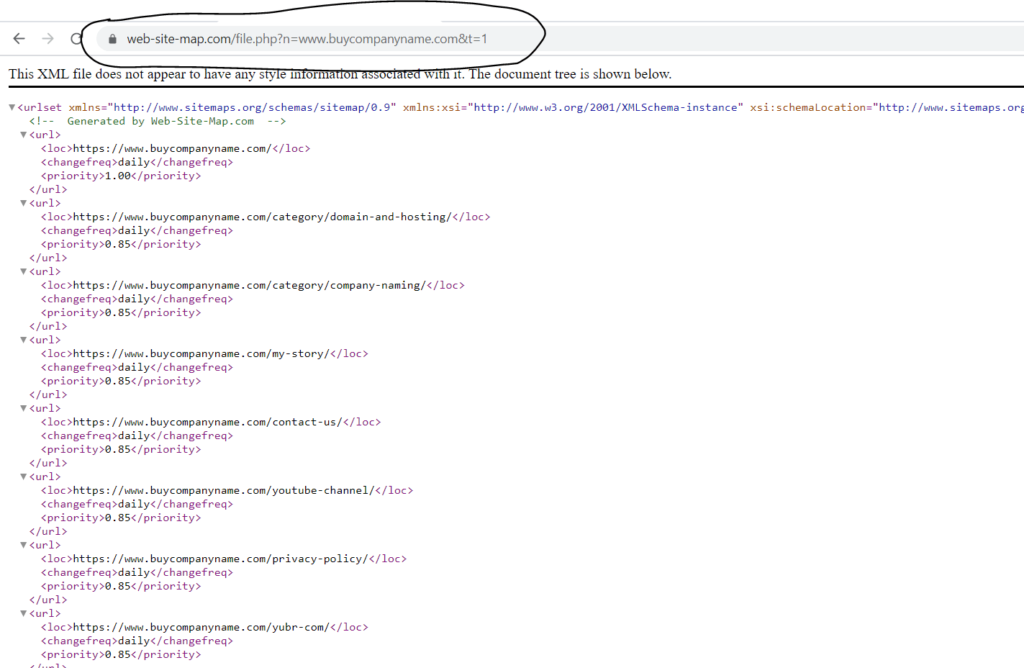

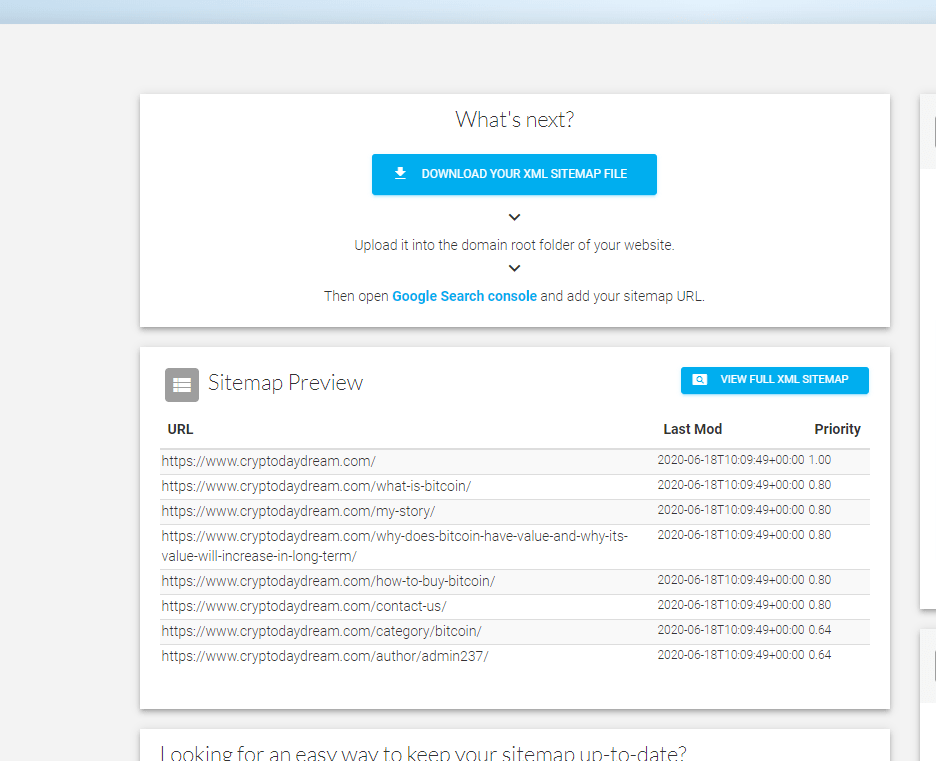

An easier way to extract URLs is to use a tool that generates a sitemap for you. The downside is that these tools limit how many pages you can extract. For example, https://www.web-site-map.com/ has a limit of around 3,500 to 5,000 pages, depending on server load.
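Sitemap generators of this kind generally work by crawling: they fetch a page, collect the links that stay on the same site, and repeat until they run out of pages or hit their limit. This is a general description, not how web-site-map.com itself is implemented. The sketch below shows the idea with the standard library. The names and the default `max_pages=500` cap are assumptions; it does not honor robots.txt or rate limits, and it only sees links present in the raw HTML (not ones added by JavaScript), so use it politely and only on sites you are allowed to crawl.

```python
import urllib.request
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag fed to the parser."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def same_site_links(html: str, page_url: str) -> list[str]:
    """Absolute, fragment-free http(s) links on page_url that stay on its host."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    links: list[str] = []
    for href in collector.hrefs:
        absolute, _fragment = urldefrag(urljoin(page_url, href))
        parsed = urlparse(absolute)
        if parsed.scheme in ("http", "https") and parsed.netloc == host and absolute not in links:
            links.append(absolute)
    return links


def crawl(start_url: str, max_pages: int = 500, timeout: float = 10.0) -> list[str]:
    """Breadth-first crawl from start_url; returns up to max_pages HTML page URLs."""
    seen = {start_url}
    queue = deque([start_url])
    pages: list[str] = []
    while queue and len(pages) < max_pages:
        url = queue.popleft()
        try:
            with urllib.request.urlopen(url, timeout=timeout) as response:
                if "text/html" not in response.headers.get("Content-Type", ""):
                    continue  # skip images, PDFs, etc.
                html = response.read().decode("utf-8", errors="replace")
        except OSError:
            continue  # unreachable page or HTTP error
        pages.append(url)
        for link in same_site_links(html, url):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return pages
```

A call such as `crawl("https://www.example.com/", max_pages=100)` returns the page URLs it managed to reach, in the order it found them.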

This is how you can use it:

Enter the URL and click “Get free XML Sitemap”.

Click on the sitemap you created.

Copy the URL from your browser’s address bar.

Now use the same Google Sheets trick from the previous section:

Make a copy of this sheet: https://docs.google.com/spreadsheets/d/1-QiRWQVHqg7nL56Uwy_kqHt8i53oaq4yvG7duGIW3C4/edit#gid=1429691697 Then replace the contents of cell B2 with the sitemap link.

Credit to Mario Lambertucci for the Google Sheets trick above.

Another alternative is https://www.xml-sitemaps.com/, but its free version is limited to 500 pages. To check a site with more than 500 pages, you need to upgrade to the Pro version.

Final Words

I hope this guide was helpful! If it was, please share it on social media. It would be a huge help for my blog. Thanks!